Jun 30, 2011

“Power Inverter-Converter” Lets EV Batteries Supply Homes

The Intelligent Power Conditioner (power inverter-converter) is based on power control technology that Sharp has cultivated over the course of developing power "conditioners" for photovoltaic power generation systems. With the new unit, solar cells and storage batteries can be operated in conjunction with power from the regular electrical grid to provide a stable electricity supply. In anticipation of wider use of direct current (DC) home appliances, the unit can also supply DC electricity. Electric vehicles are expected to grow in popularity, and Sharp's technology is significant because it enables an additional use of EV batteries. In its proof-of-concept trials, the company succeeded in supplying eight kilowatts (kW) of power -- enough to cover the use of an average household -- from the battery pack of a commercially available EV. In addition, the unit can charge four kilowatt-hours (kWh) of electricity in the EV's storage battery in about 30 minutes. Read more from Japan for Sustainability (link from David Schaller)
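The two figures quoted above line up: charging 4 kWh in about 30 minutes works out to an average charging power of about 8 kW, the same as the reported supply rate. Here is a minimal Python sketch of that arithmetic. The function name is illustrative, and it ignores charging losses, which the article does not discuss.

```python
def average_power_kw(energy_kwh: float, duration_hours: float) -> float:
    """Average power (kW) needed to transfer energy_kwh in duration_hours."""
    if duration_hours <= 0:
        raise ValueError("duration_hours must be positive")
    return energy_kwh / duration_hours


# Figures from the article: 4 kWh charged in about 30 minutes (0.5 h).
# Losses are ignored here; that is an assumption of this sketch.
charge_power_kw = average_power_kw(4.0, 0.5)
print(f"Average charging power: {charge_power_kw:.1f} kW")
```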

Wastewater Plant Aims to Achieve and Exceed Energy Independence

The city’s initial goal for the 20-million-gallons-per-day (mgd) facility is to have it achieve energy independence by 2015. The city is using a cogeneration engine that converts methane gas from the WWTP’s digesters into electrical power and heat. The methane-to-energy solution has helped Gresham WWTP cut its electricity costs by about $23,000 per month, and today the facility produces half of the energy it consumes. Further fueling the plant is a 419-kW solar array, expected to supply 7% of the WWTP’s yearly demand. The facility also acquires wind power from in-state wind farms via Portland General Electric’s Clean Wind Program, which supplies another 18% of Gresham WWTP’s energy. The facility currently produces about 60% of its power on site. A new fats, oils and grease receiving station will increase digester gas production, and a second co-generator will use this gas to fill the plant’s energy gap. Furthermore, plans are in place to install high-efficiency aeration blowers and to swap out the current digester mixing system for more energy-efficient technology. Ultimately, the facility plans not only to produce enough energy for its own needs but also surplus energy that can be added to the grid for other local needs. Read more at Water and Wastes Digest
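The percentages above can be tallied to see how large the remaining energy gap is. This rough Python sketch assumes that all three figures refer to the same annual demand, and that the "half" comes from the cogeneration engine. Both are my reading of the article, not something it states outright.

```python
# Shares of annual energy demand quoted in the article, in percent.
# Attributing the "half" to cogeneration is an interpretation, not a stated fact.
SOURCES = {
    "cogeneration": {"share": 50, "on_site": True},
    "solar": {"share": 7, "on_site": True},
    "wind": {"share": 18, "on_site": False},  # purchased, generated off site
}


def summarize(sources):
    """Return on-site share, total covered share, and remaining gap (percent)."""
    on_site = sum(s["share"] for s in sources.values() if s["on_site"])
    covered = sum(s["share"] for s in sources.values())
    return {"on_site": on_site, "covered": covered, "gap": 100 - covered}


summary = summarize(SOURCES)
print(summary)
```

Under these assumptions the on-site total of 57% is in line with the "about 60%" quoted, and roughly a quarter of demand is left for the second co-generator and the efficiency upgrades to cover.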

EPA Improves Access to Information on Chemicals

EPA is releasing two databases—the Toxicity Forecaster database (ToxCastDB) and a database of chemical exposure studies (ExpoCastDB)—that scientists and the public can use to access chemical toxicity and exposure data.

"Chemical safety is a major priority of EPA and its research," said Dr. Paul Anastas, assistant administrator of EPA's Office of Research and Development. "These databases provide the public access to chemical information, data and results that we can use to make better-informed and timelier decisions about chemicals to better protect people's health." ToxCastDB users can search and download data from over 500 rapid chemical tests conducted on more than 300 environmental chemicals. ToxCast uses advanced scientific tools to predict the potential toxicity of chemicals and to provide a cost-effective approach to prioritizing which chemicals of the thousands in use require further testing. ToxCast is currently screening 700 additional chemicals, and the data will be available in 2012. ExpoCastDB consolidates human exposure data from studies that have collected chemical measurements from homes and child care centers. Data include the amounts of chemicals found in food, drinking water, air, dust, indoor surfaces, and urine. ExpoCastDB users can obtain summary statistics of exposure data and download datasets. EPA will continue to add internal and external chemical exposure data and advanced user interface features to ExpoCastDB. The new databases link together two important pieces of chemical research—exposure and toxicity data—both of which are required when considering potential risks posed by chemicals. The databases are connected through EPA's Aggregated Computational Toxicology Resource (ACToR), an online data warehouse that collects data on over 500,000 chemicals from over 500 public sources.

Jun 29, 2011

Love the Smell of DDT in the mornin

In the 1940s and ’50s dichlorodiphenyltrichloroethane, a synthetic pesticide better known as DDT, was used to kill bugs that spread malaria and typhus in several parts of the world. DDT was argued to be toxic to humans and the environment in the famous environmental opus, Silent Spring. It was banned by the U.S. government in 1972.

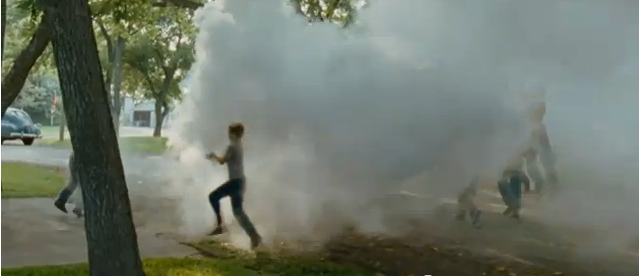

Before all that, though, it was sprayed in American neighborhoods to suppress insect populations. The new movie Tree of Life has a great scene re-enacting the way that children would frolic in the spray as the DDT trucks went by. Here are two screen shots from the trailer:

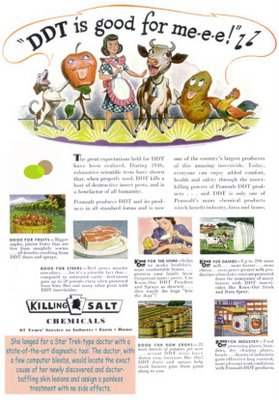

The scene reminded me of an old post we’d written, below, featuring advertisements for the pesticide, one with the ironic slogan “DDT is good for Me-e-e!”

“The great expectations held for DDT have been realized. During 1946, exhaustive scientific tests have shown that, when properly used, DDT kills a host of destructive insect pests, and is a benefactor of all humanity.

Pennsalt produces DDT and its products in all standard forms and is now one of the country’s largest producers of this amazing insecticide. Today, everyone can enjoy added comfort, health and safety through the insect-killing powers of Pennsalt DDT products . . . and DDT is only one of Pennsalt’s many chemical products which benefit industry, farm and home.”

See more from Sociological Images by Lisa Wade

Researchers discover source for generating 'green' electricity

Researchers say the material could potentially be used to capture waste heat from a car's exhaust that would heat the material and produce electricity for charging the battery in a hybrid car. Other possible future uses include capturing rejected heat from industrial and power plants or temperature differences in the ocean to create electricity. The research team is looking into possible commercialization of the technology.

"This research is very promising because it presents an entirely new method for energy conversion that's never been done before," said University of Minnesota aerospace engineering and mechanics professor Richard James, who led the research team. "It's also the ultimate 'green' way to create electricity because it uses waste heat to create electricity with no carbon dioxide." To create the material, the research team combined elements at the atomic level to create a new multiferroic alloy, Ni45Co5Mn40Sn10. Multiferroic materials combine unusual elastic, magnetic and electric properties. The alloy Ni45Co5Mn40Sn10 achieves multiferroism by undergoing a highly reversible phase transformation where one solid turns into another solid. During this phase transformation the alloy undergoes changes in its magnetic properties that are exploited in the energy conversion device. During a small-scale demonstration in a University of Minnesota lab, the new material created by the researchers begins as a non-magnetic material, then suddenly becomes strongly magnetic when the temperature is raised a small amount. When this happens, the material absorbs heat and spontaneously produces electricity in a surrounding coil. Some of this heat energy is lost in a process called hysteresis...a thin film of the material that could be used, for example, to convert some of the waste heat from computers into electricity. "This research crosses all boundaries of science and engineering," James said. "It includes engineering, physics, materials, chemistry, mathematics and more. It has required all of us within the university's College of Science and Engineering to work together to think in new ways."

Read full at PhysOrg

Japan Feeding Food Waste-Derived Biogas into City Grid

The raw biogas (methane: 60%; carbon dioxide and other gases: 40%) is produced from food residue at the methane fermentation plant of Bio Energy in Ota Ward, Tokyo, the largest food residue methane fermentation plant in Japan.

Read more from Japan for Sustainability

Diet Soda increases weight and risk of diabetes

Two new studies found that diet drinks and artificial sweeteners increase people's waistlines and increase their risk of diabetes.

From ScienceDaily:

Measures of height, weight, waist circumference and diet soda intake were recorded at SALSA enrollment and at three follow-up exams that took place over the next decade. The average follow-up time was 9.5 years... Diet soft drink users, as a group, experienced 70 percent greater increases in waist circumference compared with non-users. Frequent users, who said they consumed two or more diet sodas a day, experienced waist circumference increases that were 500 percent greater than those of non-users.

Abdominal fat is a risk factor for several conditions, including diabetes, heart disease, and cancer. The researchers say this finding shows that national campaigns against sugary drinks should emphasize that replacing them with diet soft drinks won't necessarily make you healthier.

The report doesn't weigh in on whether a raging Diet Coke addiction is preferable to a regular Coke addiction, but it goes on to note that the artificial sweetener aspartame is also bad news if you're concerned about diabetes. In a related study, a group of mice were fed a high-fat diet including the chemical for three months. Compared to the control group, the aspartame-consuming mice had elevated fasting glucose levels and equal or diminished insulin levels. Co-author Dr. Gabriel Fernandes explains:

"These results suggest that heavy aspartame exposure might potentially directly contribute to increased blood glucose levels, and thus contribute to the associations observed between diet soda consumption and the risk of diabetes in humans."

Commercialize Low-Temperature Waste Heat Recovery System

Da Vinci Co., a start-up company specializing in heat control technologies in Nara Prefecture, Japan, has been developing a system that recovers low-temperature waste heat and generates electricity using a rotary heat engine (RHE). The utilization of low-temperature waste heat of 80 to 200 degrees Celsius has long been considered technologically unfeasible. The company intends to complete a 30-kilowatt system by May 2011, start production around December after a durability test, and commence sales in March 2012.

Da Vinci's RHE is an external-combustion type, Wankel-based rotary heat engine driven by the Rankine cycle. Da Vinci has developed RHEs jointly with the University of Tokyo, completing a 500-watt model in June 2009.

The RHE consists of a rotor, power generator, evaporator, condenser, and working fluid. External waste heat applied to the engine vaporizes the working fluid in the evaporator, which rotates the rotor, generating electricity with a directly-coupled generator. Gas that comes out of the rotor turns back into fluid in the condenser, from which point it returns to the evaporator.

Different working fluids including water, ethanol, and ammonia can be chosen for different waste heat temperatures. The system harnesses temperature differentials near the boiling point of the working fluid to effectively recycle low-temperature waste heat, and can be applied to generate power from waste heat recovered in factories, co-generation systems, and boilers. Read more from Japan for Sustainability
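To get a feel for why 80 to 200 degree Celsius heat is hard to use, it helps to look at the Carnot limit, the theoretical ceiling on any heat engine's efficiency. The Python sketch below is a back-of-the-envelope illustration, not a model of Da Vinci's engine. The 25 degree Celsius heat-sink temperature is an assumption, and the article quotes no efficiency figures for the RHE.

```python
def carnot_efficiency(hot_celsius: float, cold_celsius: float) -> float:
    """Upper bound on heat-engine efficiency between two temperatures."""
    hot_k = hot_celsius + 273.15
    cold_k = cold_celsius + 273.15
    if hot_k <= cold_k:
        raise ValueError("hot side must be warmer than cold side")
    return 1.0 - cold_k / hot_k


SINK_CELSIUS = 25.0  # assumed ambient heat sink, not a figure from the article

for source_celsius in (80.0, 200.0):  # waste-heat range quoted in the article
    limit = carnot_efficiency(source_celsius, SINK_CELSIUS)
    print(f"{source_celsius:.0f} C source: Carnot limit {limit:.1%}")
```

A real Rankine-cycle engine will fall well short of these ceilings, which is part of why low-temperature waste heat has been so hard to recover economically.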

Jun 28, 2011

Government fix the budget better by doing nothing

Old people dying from side effects of commonly used drugs

The anticholinergic drugs, regularly taken by half of OAPs, help problems such as heart disease, constipation, incontinence and indigestion.

But mixing the medication, which includes anti-depressants, tranquillisers, painkillers and even eye drops, could increase the risk of dying.

A study of 13,000 people found the danger increased the more they took.

Read full at Mirror News

The Energy Information Administration's perspective on shale gas

A June 27, 2011, New York Times article, “Behind Veneer, Doubt on Future of Natural Gas,” focuses on the Energy Information Administration’s (EIA) consideration of shale gas. EIA was contacted by a Times reporter in advance of the story, and provided a response that described the agency’s approach to developing its shale gas projections. Those interested in EIA’s views on shale gas, which differ in significant respects from those outlined in the June 27 article, may want to review the EIA response to the inquiry from the Times, the Issues in Focus discussion of shale gas included in the Annual Energy Outlook 2011, and a recent presentation on domestic and international shale gas.

Hybrid cars' share of sales drop off

Jun 27, 2011

Cancer Cluster Possibly Found Among TSA Workers

Tapping strategic reserves in a non-emergency is a bad idea

An important question is what happens next. After OPEC failed this month to agree to increase production... Read more at NY Times

H.R. 1540 would reverse Military’s Clean Energy March

It's made significant efforts to wean the military services from their sole dependence on fossil fuels—particularly jet and diesel fuel made from oil—to power their planes, ships, and vehicles. Pollution from burning these fuels contributes to global warming, which, according to military leaders, is a "threat multiplier" for national security. Instead, the services are developing more efficient aviation, naval, and terrestrial heavy equipment, and various cleaner domestic advanced biofuels.*

Unfortunately, the House Armed Services Committee's National Defense Authorization Act, H.R. 1540, would reverse this progress.

Section 844 of the bill would actually allow the military to use alternative fossil fuels that produce more pollution than conventional fuels. The House plans to debate H.R. 1540 over the next several days. Congress must remove this provision to enhance national security.

Read on at AmericanProgress

DVR, Cable and Satellite Boxes Waste $2 Billion of Electricity Every Year

There are approximately 160 million set-top boxes installed in US homes, or the equivalent of one box for every two Americans.

These boxes consume as much electricity each year as that consumed by the entire state of Maryland...

The NRDC study, Reducing the National Energy Consumption of Set-Top Boxes, also found that today's average new cable high-definition digital video recorder (HD-DVR) consumes more electricity annually than the new flat panel TV to which it's typically connected and about 40% more than its basic set-top box counterpart. In contrast, cell phones, which also work on a subscriber basis with a need for secure connections, are able to use extremely low levels of power when not in use – primarily to preserve battery life.

Full Report Here
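For scale, the headline figure can be spread across the installed base. This small Python sketch uses only the two numbers quoted in this post ($2 billion a year and about 160 million boxes). The function name is illustrative, and dividing the total evenly across all boxes is a simplifying assumption.

```python
def annual_cost_per_box(total_cost_usd: float, installed_boxes: int) -> float:
    """Average yearly cost per set-top box, assuming an even split."""
    if installed_boxes <= 0:
        raise ValueError("installed_boxes must be positive")
    return total_cost_usd / installed_boxes


# Figures quoted in this post: $2 billion per year, about 160 million boxes.
per_box_usd = annual_cost_per_box(2_000_000_000, 160_000_000)
print(f"About ${per_box_usd:.2f} of wasted electricity per box per year")
```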

New Technology Turns Windows Into Solar Panels

"A start-up in Northern California is working on creating 'solar windows' that could act as solar panels at the same time as blocking sunlight from entering office buildings to reduce their energy needs." The technology is a class of equipment that seeks to replace parts of buildings with solar panels to generate energy. Other possibilities include window awnings and roofing tiles.

Some of Pythagoras' windows are already installed (2 MW) on Chicago's Willis Tower (formerly known as the Sears Tower).

Read more

Flood Berm Collapses at Nebraska Nuclear Plant

The Fort Calhoun Nuclear Station shut down in early April for refueling, and there is no water inside the plant, the U.S. Nuclear Regulatory Commission said. Also, the river is not expected to rise higher than the level the plant was designed to handle. NRC spokesman Victor Dricks said the plant remains safe.

The Omaha Public Power District has said the complex will not be reactivated until the flooding subsides. Its spokesman, Jeff Hanson, said the berm wasn’t critical to protecting the plant but a crew will look at whether it can be patched.

Read more of AP article from Yahoo News

"Taxes on ‘Small Business’ Must Rise So Government Doesn’t Shrink" - Timothy Geithner

Ellmers: "Sixty-four percent of jobs that are created in this country are for small business."

Geithner conceded the point, but then suggested the administration's planned tax increase on small businesses would be "good for growth." Not only that, he argued, but cutting spending by as much as the "modest change in revenue" (i.e. $1 trillion) the administration expects from raising taxes on small business would likely have more of a "negative economic impact" than the tax increases themselves would.

When Ellmers finally told Geithner that "the point is we need jobs," he responded that the administration felt it had "no alternative" but to raise taxes on small businesses because otherwise "you have to shrink the overall size of government programs"—including federal education spending.

"We're not doing it because we want to do it, we're doing it because we see no alternative to a balanced approach to reduce our fiscal deficits," said Geithner.

Read full at CNSNews.com

Low-Calorie, Low-Carb Diet is Sufficient to Reverse Type 2 Diabetes

Seven of the 11 patients remained free of diabetes three months after the study, researchers said.

Type 2 diabetes, also known as adult-onset diabetes, has been thought to be a progressive, irreversible condition. Once diagnosed, some patients can control their diabetes with tablets, but many eventually require insulin injections.

One patient, 67-year-old Gordon Parmley, ate salad and vegetables and three diet shakes per day. “At first the hunger was quite severe and I had to distract myself with something else – walking the dog, playing golf – or doing anything to occupy myself and take my mind off food,” he said in a statement.

“But I lost an astounding amount of weight in a short space of time ... after six years, I no longer needed my diabetes tablets.”

MIT’s eSuperbike takes on the Isle of Man

HackDay - While the Isle of Man typically plays host to an array of gas-powered superbikes screaming through villages and mountain passes at unbelievable speeds, the island's TT Race is a bit different. Introduced in 2009 to offer a greener alternative to the traditional motorcycle race, organizers opened up the course to electric bikes of all kinds... Their entry into the race is the brainchild of PhD student [Lennon Rodgers] and his team of undergrads. They first designed a rough model of the motorcycle they wanted to build in CAD, and through a professor at MIT sourced some custom-made batteries for their bike.

While the team didn't take the checkered flag, they did finish the race in 4th place. Their bike managed to complete the course with an average speed of 79 mph, which isn't bad according to [Rodgers]. He says that for their first time out, he's happy that they finished at all, which is not something every team can claim. Read full at HackDay

Jun 26, 2011

New Process Allows Fuel Cells To Run On Coal

GizMag - Lately we're hearing a lot about the green energy potential of fuel cells, particularly hydrogen fuel cells. Unfortunately, although various methods of hydrogen production are being developed, it still isn't as inexpensive or easily obtainable as fossil fuels such as coal. Scientists from the Georgia Institute of Technology, however, have recently taken a step towards combining the eco-friendliness of fuel cell technology with the practicality of fossil fuels - they've created a fuel cell that runs on coal gas.

For some time now, it has been possible to operate solid oxide fuel cells using hydrocarbons. Those cells typically conk out in as little as half an hour, however, because carbon deposits have formed on their anodes in a clogging process known as "coking."

The Georgia Tech researchers have devised a vapor-deposition technique for growing nanostructures from barium oxide nanoparticles on those anodes. By absorbing moisture, the structures start a water-based chemical reaction that oxidizes carbon deposits as they form. Using this approach, the team have been able to run coal gas-powered fuel cells for up to 100 hours, with no signs of carbon deposits.

Read more at GizMag. The research was also recently published in the journal Nature Communications.

Asteroid To Pass Near Earth On Monday vs Debt & Moon

Economy Reality Check - U.S. Growth Outlook...Verge of a Great, Great Depression?

I would let the data speak for itself and let good judgement decide what you think.

U.S. Power-Grid Experiment - Y2K 2011 edition

Credibility questioned on claim of American infant deaths increased by 35% from Fukushima fallout

At first glance, the story looks credible. And scary. The information comes from a physician, Janette Sherman MD, and epidemiologist Joseph Mangano, who got their data from the Centers for Disease Control and Prevention's Morbidity and Mortality Weekly Reports—a newsletter that frequently helps public health officials spot trends in death and illness.

Look closer, though, and the credibility vanishes. For one thing, this isn't a formal scientific study and Sherman and Mangano didn't publish their findings in a peer-reviewed journal, or even on a science blog. Instead, all of this comes from an essay the two wrote for Counter Punch, a political newsletter. And when Sci Am's Moyer holds that essay up to the standards of scientific research, its scary conclusions fall apart.

...why did the authors choose to use only the four weeks preceding the Fukushima disaster? Here is where we begin to pick up a whiff of data fixing. ... While it certainly is true that there were fewer deaths in the four weeks leading up to Fukushima than there have been in the 10 weeks following, the entire year has seen no overall trend. When I plotted a best-fit line to the data, Excel calculated a very slight decrease in the infant mortality rate. Only by explicitly excluding data from January and February were Sherman and Mangano able to froth up their specious statistical scaremongering.
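The window-picking problem described above is easy to reproduce. The Python sketch below uses a made-up weekly series (HYPOTHETICAL numbers invented for illustration, not the CDC figures) that is flat apart from a short dip just before an arbitrary "event" week. It compares a cherry-picked before/after window with an ordinary least-squares slope over the whole series, the same kind of best-fit line a spreadsheet trendline draws.

```python
def mean(values):
    return sum(values) / len(values)


def least_squares_slope(values):
    """Slope of the ordinary least-squares line through (0, v0), (1, v1), ..."""
    n = len(values)
    xs = range(n)
    mean_x = mean(xs)
    mean_y = mean(values)
    numerator = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, values))
    denominator = sum((x - mean_x) ** 2 for x in xs)
    return numerator / denominator


# HYPOTHETICAL weekly counts, invented for this sketch (not real data).
weekly_counts = [130, 128, 131, 127, 129, 130, 118, 117, 119, 116,
                 128, 130, 127, 129, 131, 128, 130, 126, 129, 127]
event_index = 10  # hypothetical first week after the event

# Cherry-picked comparison: only the four weeks before vs. everything after.
before = weekly_counts[event_index - 4:event_index]
after = weekly_counts[event_index:]
window_change = (mean(after) - mean(before)) / mean(before)

# Whole-series view: trend per week relative to the average level.
relative_slope = least_squares_slope(weekly_counts) / mean(weekly_counts)

print(f"Four-week window comparison: {window_change:+.1%}")
print(f"Full-series trend per week:  {relative_slope:+.2%}")
```

With this invented series the narrow window suggests a jump of several percent, while the full-series trend is close to flat. It is the same mechanism Moyer points to, shown on toy numbers.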

You can see that data all plotted out nicely by Moyer over at Sci Am. How did these numbers get so heinously distorted? It's hard to say. But there should be some important lessons here. In particular, this is a good reminder that human beings do not always behave the way some economists think we do. We're not totally rational creatures. And profit motive is not the only factor driving our choices.

Antibiotic resistance.... that really is killing kids

A new strain of scarlet fever is about twice as resistant to antibiotics as previous antibiotic-resistant strains. This is heart-wrenching. My thoughts are with the families in Hong Kong suffering through this outbreak.

Could "pervious concrete" be urban and industrial stormwater runoff salvation?

Jun 25, 2011

Radiation By The Numbers... Nuclear energy is not biggest threat

Energy Saving Compressed Air Webinar on June 28

Are profits leaking from those lines? Compressed air may be free but the compression is not. Over-pressurized headers and tools, leaks, and compressor competition within a compressed air system cost money and energy over and over again as air is misused, wasted, or inefficiently produced. Learn how to save money and energy by checking and maintaining a compressed air system.

Date: Tuesday, June 28, 2011

Time: 1:00 PM - 2:00 PM CDT

Reserve your Webinar seat now at:

https://www1.gotomeeting.com/register/762297377

Please register today and pass this invitation on to those who could benefit. Also, see booklet with case studies on these areas plus water conservation, Easy Material and Energy Savings,

http://nbdc.unomaha.edu/energy/energy_book.pdf

Expert: Shutting Down U.S. Nuclear Plants Would Have Daunting Effect on Economy, Environment

Given time and enough investment, some of the generation lost by shutting down nuclear plants could be made up by developing renewable resources and improving energy efficiency, but the size of the potential shortfall is daunting.

Shutting down nuclear power plants will have significant economic and environmental consequences, according to a new study by researchers at Carnegie Mellon University and DAI Management Consultants Inc. Shifting from nuclear to other types of power plants could affect the reliability of the electricity supply, electricity costs, air pollution, carbon emissions, and the reliance on fossil fuels like coal and natural gas, the researchers said...Additional statistics and an interactive tool to investigate the economic and environmental impacts of shutting nuclear power plants in the US can be downloaded here

Department of Agriculture Unsustainable Budget - greater than nation's net farm income

The Department of Agriculture budget of some $130 billion ... is a sum far greater than the nation's net farm income this year. In fact, the more the Agriculture Department has pontificated about family farmers, the more they have vanished -- comprising now only about 1 percent of the American population.

Net farm income is expected in 2011 to reach its highest levels in more than three decades, as a rapidly growing and food-short world increasingly looks to the United States to provide it everything from soybeans and wheat to beef and fruit. Somebody should explain that good news to the Department of Agriculture: This year it will give a record $20 billion in various crop "supports" to the nation's wealthiest farmers --  with the richest 10 percent receiving over 70 percent of all the redistributive payouts. If farmers on their own are making handsome profits, why, with a $1.6 trillion annual federal deficit, is the Department of Agriculture borrowing unprecedented amounts to subsidize them?

At least $5 billion will be in direct cash payouts. Yet no one in the USDA can explain why cotton and soybeans are subsidized, but not lettuce or carrots. In fact, 70 percent of all subsidies go to corn, wheat, cotton, rice and soybean farmers. Most other farmers receive no federal cash. Yet somehow peach, melon and almond growers seem to be doing fine without government checks in the mail.

Then there is the more than $5 billion in ethanol subsidies that goes to the nation's corn farmers to divert their acreage to produce transportation fuel. That program has somehow managed to cost the nation billions... read more from source

Ouch, as if Nuclear Energy could take another punch...

Here is this week's rundown:

- UK Sticks With Nuclear Power -"Despite recent events in Japan and the certain public outcry that it will generate, the UK government proposes to build new nuclear power stations. Well, earthquakes and tsunamis are very rare here."

- Yucca Nuclear waste dump is mired in inertia: $15 billion Hole to Nowhere. The license for Yucca Mountain, which has been in development for nearly 30 years and has cost more than $15 billion so far, has been in limbo since last June, when a licensing board independent of Jaczko and the rest of the commission rejected the Obama administration's request to withdraw the project application. Jaczko has yet to schedule a final vote from the five-member commission on the matter. (...) Jaczko knew his decision to shut down the technical review of Yucca Mountain, which would be used by the board to evaluate the license, "would be controversial and viewed as a policy decision for full commission consideration," the report says. "Therefore ... he strategically provided three of the four commissioners with varying amounts of information about his intention."

- Radioactive Tritium Has Leaked from Three-Quarters of U.S. Commercial Nuclear Power Sites, often into groundwater from corroded, buried piping, an Associated Press investigation shows. The number and severity of the leaks have been escalating, even as federal regulators extend the licenses of more and more reactors across the nation. Tritium, which is a radioactive form of hydrogen, has leaked from at least 48 of 65 sites, according to U.S. Nuclear Regulatory Commission records reviewed as part of the AP’s yearlong examination of safety issues at aging nuclear power plants. Leaks from at least 37 of those facilities contained concentrations exceeding the federal drinking water standard, sometimes at hundreds of times the limit. While most leaks have been found within plant boundaries, some have migrated offsite.

- AP Investigation Concludes US Nuke Weakening Safety Rules "An investigation by the Associated Press has found a pattern of safety regulations being relaxed in order to keep aging nuclear power plants running. According to their investigation, when reactor parts fail or systems fall out of compliance with the rules, studies are conducted by the industry and government. The studies conclude that existing standards are 'unnecessarily conservative.' Regulations are loosened, and the reactors are back in compliance. — all of these and thousands of other problems linked to aging were uncovered in the AP's yearlong investigation. And all of them could escalate dangers in the event of an accident.'"

“Fukushima has now been classified as the biggest industrial catastrophe in the history of mankind,” with the amount of radioactive fuel at Fukushima dwarfing that at Chernobyl.

Arnie Gundersen has said that Fukushima is the worst industrial accident in history, and has 20 times more radiation than Chernobyl. Well-known physicist Michio Kaku just confirmed all of the above in a CNN interview:

In the last two weeks, everything we knew about that accident has been turned upside down. We were told three partial melt downs, don’t worry about it. Now we know it was 100 percent core melt in all three reactors. Radiation minimal that was released. Now we know it was comparable to radiation at Chernobyl.

Radioactive materials spewed out from the crippled Fukushima No. 1 Nuclear Power Plant reached North America soon after the meltdown

The scientific journal Nature notes: The computer simulation by researchers at Kyushu University and the University of Tokyo, among other institutions, calculated dispersal of radioactive dust from the Fukushima plant beginning at 9 p.m. on March 14, when radiation levels around the plant spiked.

The team found that radioactive dust was likely caught by the jet stream and carried across the Pacific Ocean, its concentration dropping as it spread. According to the computer model, radioactive materials at a concentration just one-one hundred millionth of that found around the Fukushima plant hit the west coast of North America three days later, and reached the skies over much of Europe about a week later.

Shortly after a massive tsunami struck the Fukushima Daiichi nuclear power plant on 11 March, an unmanned monitoring station on the outskirts of Takasaki, Japan, logged a rise in radiation levels. Within 72 hours, scientists had analysed samples taken from the air and transmitted their analysis to Vienna, Austria — the headquarters of the Preparatory Commission for the Comprehensive Nuclear-Test-Ban Treaty Organization (CTBTO), an international body set up to monitor nuclear weapons tests.

It was just the start of a flood of data collected about the accident by the CTBTO's global network of 63 radiation monitoring stations. In the following weeks, the data were shared with governments around the world, but not with academics or the public.

Jun 24, 2011

NASA - Getting Ready for the Next Big Solar Storm

As 2011 unfolds, the sun is once again on the eve of a below-average solar cycle, or at least that’s what forecasters are saying. The "Carrington event" of 1859 (named after astronomer Richard Carrington, who witnessed the instigating flare) reminds us that strong storms can occur even when the underlying cycle is nominally weak. In 1859 the worst-case scenario was a day or two without telegraph messages and a lot of puzzled sky watchers on tropical islands.

In 2011 the situation would be more serious....

An avalanche of blackouts carried across continents by long-distance power lines could last for weeks to months as engineers struggle to repair damaged transformers. Planes and ships couldn’t trust GPS units for navigation. Banking and financial networks might go offline, disrupting commerce in a way unique to the Information Age. According to a 2008 report from the National Academy of Sciences, a century-class solar storm could have the economic impact of 20 hurricane Katrinas.

As policy makers meet to learn about this menace, NASA researchers a few miles away are actually doing something about it:

"We can now track the progress of solar storms in 3 dimensions as the storms bear down on Earth," says Michael Hesse, chief of the GSFC Space Weather Lab and a speaker at the forum. "This sets the stage for actionable space weather alerts that could preserve power grids and other high-tech assets during extreme periods of solar activity."

... This kind of "interplanetary forecast" is unprecedented in the short history of space weather forecasting. "This is a really exciting time to work as a space weather forecaster," says Antti Pulkkinen, a researcher at the Space Weather Lab. "The emergence of serious physics-based space weather models is putting us in a position to predict if something major will happen." Read more at NASA and more information may be found at the SWEF 2011 home page.

11-Year-Old Pilots 1,325 MPG Concept Car

"Hypermiling vehicles depend on ultra-efficient engines and low weight to go the distance, so Cambridge Design Partnership selected 11-year-old Cambridgeshire student Kitty Foster as the pilot of their new 1,325 MPG car. The vehicle incorporates a highly modified lightweight oxygen concentrator that was originally developed for the Ministry of Defense to treat injured soldiers." - SlashDot

This system, powered by an innovative micro-diesel-engine, removed the need to take heavy and potentially explosive oxygen canisters onto the battlefield. The project involved Cambridge Design Partnership’s evaluation of a variety of miniature engines, one of which was selected to power this remarkable new vehicle. The car also features low friction tyres to increase mileage and was tracked using Cambridge Design Partnership’s ‘Go’ real-time tracking service. Full here

Jun 23, 2011

Smoking vs Sitting

“Smoking certainly is a major cardiovascular risk factor and sitting can be equivalent in many cases,” explained Dr. David Coven.

Dr. Coven is a cardiologist. He says several new studies show prolonged sitting is now being linked to increased risk of heart disease, obesity, diabetes, cancer, and even early death.

“The fact of being sedentary causes factors to happen in the body that are very detrimental,” said Dr. Coven.

Dr. Coven says when you sit for long periods of time, your body goes into storage mode.

When that happens, it stops working as effectively as it should. What’s worse, the more hours a day you sit, the greater your likelihood of developing one or more of these diseases, just as with smoking.

Linda Caufield has a desk job. She sits nearly seven hours a day.

“I’m on the computer, I’m on the phone, I’m doing paperwork so all that stuff has to be done at my desk,” she said.

So get up at the office at any chance you get, don’t send emails when you can deliver the message in person, take the stairs, stand up when you take a phone call. And don’t forget to take your breaks, and take a walk.

To view research from American College of Cardiology click here.

Congressional Budget Office shows debt rising to 190% of GDP

New figures released Wednesday by the Congressional Budget Office show debt rising to 190 percent of GDP by 2035. The annual long-term budget outlook forecasts a surge in public debt this year that will rise to 70 percent of GDP by the end of fiscal year 2011 compared to 62 percent by the end of 2010. The CBO offers two forecasts, both of which show the nation's finances deteriorating over the next quarter century. CBO - Report here (PDF)

Also see the following documents, which have been added to CBO's Web site:

- Federal Budget Math: We Can't Repeat the Past
CBO Director Doug Elmendorf's presentation to the Federal Reserve Bank of New York.

- Cost Estimate for H.R. 470, Hoover Power Allocation Act of 2011
Cost estimate for the bill as ordered reported by the House Committee on Natural Resources on June 15, 2011.

- CBO's 2011 Long-Term Budget Outlook: Testimony Before the House Budget Committee

Pocket Particle Accelerators Like This One Could Bring Safer Nuclear Power to Neighborhoods

A wee particle accelerator in the English countryside could be a harbinger of a safer, cleaner future of energy. Specifically, nuclear energy, but not the type that has wrought havoc in Japan and controversy throughout Europe and the U.S. It would be based on thorium, a radioactive element that is much more abundant, and much safer, than traditional sources of nuclear power.

Some advocates believe small nuclear reactors powered by thorium could wean the world off coal and natural gas, and do it more safely than traditional nuclear. Thorium is not only abundant, but more efficient than uranium or coal - one ton of the silver metal can produce as much energy as 200 tons of uranium, or 3.5 million tons of coal, as the Mail on Sunday calculates it.
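As a quick check on how the quoted equivalences relate to each other, the short sketch below takes the two figures attributed to the Mail on Sunday at face value and derives the coal equivalent of a ton of uranium that they imply. The constant names are illustrative, and the result is only as good as the newspaper's numbers.

```python
# Equivalences quoted in the article (the Mail on Sunday's calculation).
TONS_URANIUM_PER_TON_THORIUM = 200
TONS_COAL_PER_TON_THORIUM = 3_500_000

# Implied by the two figures above: coal equivalent of one ton of uranium.
tons_coal_per_ton_uranium = TONS_COAL_PER_TON_THORIUM / TONS_URANIUM_PER_TON_THORIUM

print(f"1 ton of uranium ~ {tons_coal_per_ton_uranium:,.0f} tons of coal")
```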

The newspaper took a tour of a small particle accelerator that could be used to power future thorium reactors. Nicknamed EMMA - the Electron Model of Many Applications - the accelerator would be used to jump-start fissile nuclear reactions inside a small-scale thorium power plant.

Thorium reactors would not melt down, in part because they require an external input to produce fission. Thorium atoms would release energy when bombarded by high-energy neutrons, such as the type supplied in a particle accelerator.

Providing that stimulus is one obstacle to building small thorium reactors - but a new generation of accelerators like EMMA, and someday potentially even smaller, luggage-sized ones - could do the job.

EMMA is the first non-scaling, fixed-field, alternating-gradient (NS-FFAG) accelerator, qualities that make it easier to operate and maintain, more reliable and compact, more flexible and more efficient, according to British researchers. Other particle accelerators use alternating electric fields, which require special safety measures to guard against microwave exposure, for instance. EMMA's alternating magnetic field gradients are a more efficient and cheaper way to accelerate particles to higher energies. (Brookhaven National Laboratory explains in more detail here.)

EMMA operates at around 20 MeV, or 20 million electronvolts, a paltry amount for an atom accelerator. The Tevatron, for instance, accelerates particles to 1 teraelectronvolt (TeV). The Large Hadron Collider is designed to speed them to 7 TeV. But thorium reactors would not need such high energies to initiate fission.
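To put those energy scales side by side, here is a small unit-conversion sketch. It uses only the figures quoted above (20 MeV, 1 TeV, 7 TeV) and the standard prefixes (mega = 10^6, tera = 10^12); the dictionary and variable names are illustrative.

```python
EV_PER_MEV = 10**6    # mega-electronvolt
EV_PER_TEV = 10**12   # tera-electronvolt

# Beam energies as quoted in the article, converted to electronvolts.
energies_ev = {
    "EMMA": 20 * EV_PER_MEV,
    "Tevatron": 1 * EV_PER_TEV,
    "LHC (design)": 7 * EV_PER_TEV,
}

# Express each machine's energy as a multiple of EMMA's.
ratios = {name: ev // energies_ev["EMMA"] for name, ev in energies_ev.items()}

for name, ratio in ratios.items():
    print(f"{name}: {ratio:,} x EMMA")
```

On these figures the Tevatron runs at 50,000 times EMMA's energy and the LHC's design energy is 350,000 times higher.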

British scientists are already working on a successor called PAMELA, the Particle Accelerator for Medical Applications, which will be used to treat cancer.

Click through to the Mail for a full tour of EMMA, its sister apparatus ALICE (Accelerators and Lasers In Combined Experiments), and a description of British efforts to produce thorium power.

My favorite comment from post:

Every couple of months we have a new technology that is going to revolutionise energy generation and save the planet (fusion reactors, genetically engineered algae - producing ethanol, high flying wind turbines, now this - thorium reactors, probably a load of other ideas ...). We also have some of the best wind and tidal resources in the world. Why on earth aren't we blazing away and revelling in cutting edge technology and embracing the potential that we have? Ah yes, it's because our glorious leaders are too incompetent and incapable of inspiring us. Plus their close friends in big business would rather we carried on paying extortionate prices, through a policy of scare mongering and the maintenance of cartels. To summarise; the rich carry on getting ever richer at the expense of the rest of us. Never mind, China or the USA will develop the technology and then sell it back to us at the usual insane price that we'll be happy to pay. - The von Horn, Shambury, Oxfordshire

Jun 20, 2011

Ouch... U.S. Import and Export Price Indexes - BLS

Excerpt: An analysis of the terms of trade index for the United States during the past 20 years indicates that the index has tended to vary by less than 10 percent. A spike in the import price index in 2008 (driven by sharply higher prices for fuels) was offset to some extent by a jump in the export price index (related to higher grain prices). (See chart 1.) Given the recent surge in market prices for petroleum as well as grains, the terms of trade for the United States will bear watching. Also see WSJ - "The Economy Is Worse Than You Think"

Record global corn harvests will fail to meet demand for food, fuel and livestock feed

Even a fifth consecutive year of record global corn harvests will fail to meet demand for food, fuel and livestock feed, reducing world stockpiles to the lowest in two generations... Corn may jump 36 percent to a record $9 a bushel if conditions worsen, Morgan Stanley says... “There is a storm developing in agriculture,” said Jean Bourlot, global head of commodities at UBS AG in London. “If we have the slightest disruption in any part of the world, the effect on the price will be considerable.” Please read more at Bloomberg

DOE Highlights New Global Energy Efficiency ISO 50001 Standard

This voluntary standard, developed by a project committee of 45 partnering countries from the International Organization for Standardization (ISO), provides organizations with a framework for continuous energy performance improvements. The framework will encourage adoption of best practices that reduce the energy use of existing equipment and facilities, require the use of energy performance data to target cost-effective upgrades, and emphasize the design and installation of highly efficient energy systems and equipment. By increasing their operational efficiency, organizations that adopt the ISO 50001 standard will save money by saving energy.

Please see full story at EERE

Extension of H.R. 908, Chemical Facility Anti-Terrorism Standards Act

Jun 19, 2011

How much gas is left - InfoGraphic

How Much Gas Is Left?

Natural gas: how much is left, who’s got it and how supplies oddly mirror certain geopolitical tensions. Another little interactive visual of ours.

10,000's of chemicals = Average Human Has 60 New Genetic Mutations

Osage Oppose Wind Power At Tallgrass Prairie

in general, has found significant reasons to oppose wind farms on the tallgrass prairie, 'a true national treasure' whose last small fragments remain only in Osage County and in Kansas. The Osage County wind farms would not be built in the Nature Conservancy's Tallgrass Prairie Preserve, located northeast of Ponca City, but would be visible from it and Preserve Director Bob Hamilton has urged the county and the state to steer wind development to areas of the county that are not ecologically sensitive. 'Not all areas in the Osage are sensitive,' says Hamilton. 'What makes the tallgrass prairie so special is its big landscape. It's not just local — it has global significance.' The Osage also fear that large wind farms will interfere with extracting oil and gas, from which royalties are paid in support of tribal members as the Osage retain their tribal mineral rights owned in common by members of the tribe. 'They weren't thinking about the mineral estate — just about compensating landowners,' says Galen Crum, chairman of the tribal Minerals Council. 'How are we supposed to know the price of oil in 50 years?'" - SlashDot

Water Is the New Texas Liquid Gold

The Eagle Ford's peculiar geology means each well fracked requires an amount of water equivalent to that used by 240 adults in an entire year for cooking, washing, and drinking. A study by the Texas Water Development Board and the University of Texas at Austin's Bureau of Economic Geology estimates fracking-water demand in the area will jump tenfold by 2020 and double again by 2030. "This is not the drilling your grandparents knew in West Texas," says Sharon Wilson, an organizer for Earthworks' Oil & Gas Accountability Project, which lobbies for tougher regulation of oil drillers. For now, local water departments, farmers, and oil drillers near Laredo are relying on water from two reservoirs and underground aquifers filled by last summer's tropical storm season. But that won't last forever.

Military Drone Attacks Are Not 'Hostile'?

Not satisfied with the legal conclusion of the DOJ, the Obama administration found other in-house lawyers willing to declare a bomb dropped from a drone is not 'hostile'. The strange conclusion has big implications in determining the President's compliance with the law. If drone strikes are in fact hostile and the Libyan campaign continues past Sunday, he may very well be breaking the law." - SlashDot

Haase Note:

Every gun that is made, every warship launched, every rocket fired signifies, in the final sense, a theft from those who hunger and are not fed, those who are cold and are not clothed. This world in arms is not spending money alone. It is spending the sweat of its laborers, the genius of its scientists, the hopes of its children. This is not a way of life at all, in any true sense. Under the cloud of threatening war, it is humanity hanging from a cross of iron. - Dwight D. Eisenhower

Also see:

Eisenhower's worst fears came true. We invent enemies to buy the bombs

Fire and Flood at Nebraska nuclear plant remains closed; details remain few

On June 6th, the Federal Aviation Administration issued a directive banning aircraft from entering the airspace within a two-mile radius of the plant.

"No pilots may operate an aircraft in the areas covered by this NOTAM," referring to the "notice to airmen," effective immediately.

Since last week, the plant has been under a "notification of unusual event" classification because of the rising Missouri River. That is the lowest level of emergency alert.

The OPPD claims the FAA closed airspace over the plant because of the Missouri River flooding. But the FAA ban specifically lists the Fort Calhoun Nuclear Power Plant as the location for the flight ban.

local NBC affiliate, reports on its website

- Nebraska Nuclear Plant at Level 4 Emergency

- Electrical Fire Knocks Out Spent Fuel Cooling at Nebraska Nuclear Plant

- Fort Calhoun Nuclear Power Plant Spent Fuel Pool Cooling System Stopped Working For Hours

- Nebraska Nuclear Plant Lost Cooling System After Fire

- NRC Monitors Second Event at Neb. Nuclear Plant Following Fire, Disruption of Spent-Fuel Cooling

- No Fly Zone Over Fort Calhoun Nuclear Plant Due to “Hazards”

- Dam Danger, Flooding and Ft. Calhoun Nuclear Power Plant

- Electrical Fire Knocks Out Spent Fuel Cooling at Nebraska Nuclear Plant

- NRC monitors declared fire alert, flood dangers at Fort Calhoun nuclear station